
In the late 1960s, Melvin Conway submits a paper on computer manufacturers and compiler design to the Harvard Business Review. They reject it -- insufficient evidence. It appears in Datamation in 1968. For the next few decades, almost nobody notices.

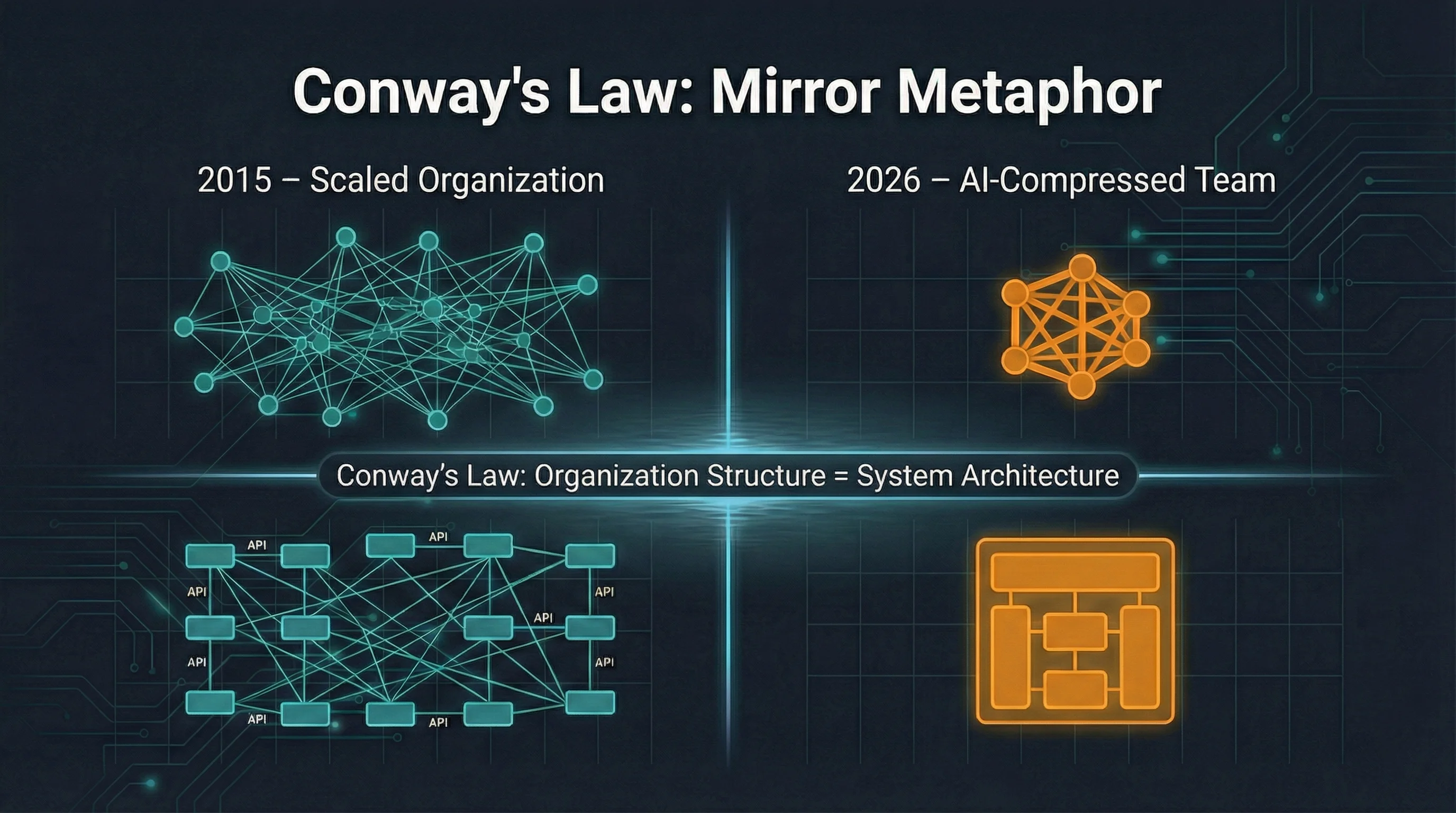

Then the microservices paradigm of the 2010s arrives, and suddenly everyone cites Conway. "Organizations which design systems are constrained to produce designs which are copies of the communication structures of these organizations."[1] No longer sociology. Explanation.

What interests me: what happens to this law when AI changes the communication structure of development teams?

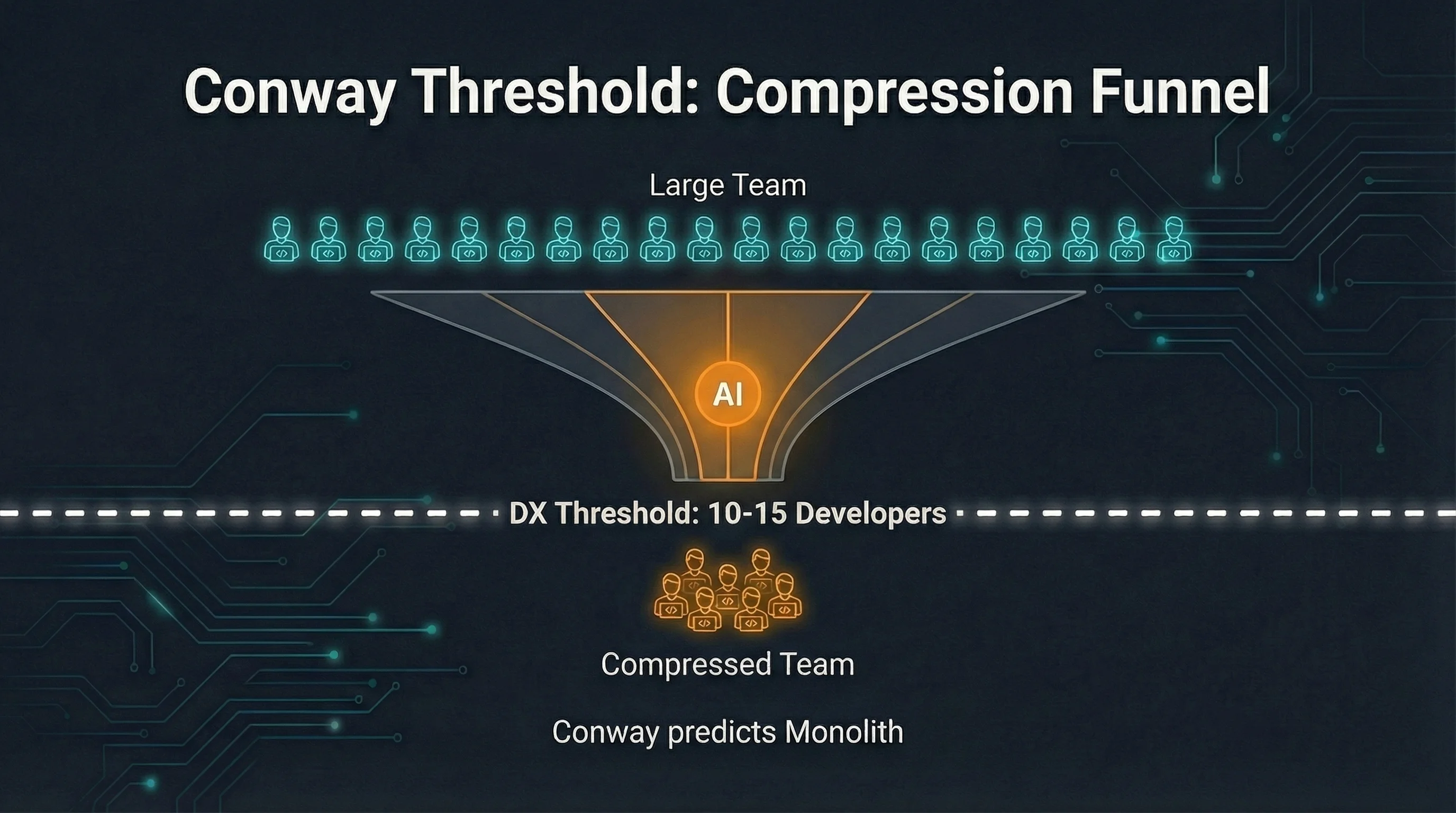

Martin Fowler frames the operational consequence like this: a dozen to twenty people can communicate deeply and informally, so Conway's Law predicts a monolith for them. And that's fine.[2]

This isn't a nostalgic plea for old architecture patterns. It's a causal claim. Microservices don't emerge because engineers find them more elegant. They emerge because organizations grow large enough that informal communication breaks down. You need hard API boundaries because you need hard team boundaries. Architecture follows communication structure -- not the other way around.

In 2015, that was the right answer for scaled tech organizations: hundreds of engineers, dozens of teams, distributed ownership. Separate deployment cycles per service made sense because the teams were already working separately. Conway wasn't the argument for microservices. Conway was the description of why they became the obvious choice.

Matthew Skelton and Manuel Pais turned this observation into a design tool in Team Topologies: the Inverse Conway Maneuver[3]. The idea: if architecture follows team structure, then deliberately shape team structure to get the architecture you want. That turns Conway from diagnosis into directive. And it also means: when team structure changes -- through AI, through market pressure, through deliberate decision -- the architectural target state changes with it.

Now comes the AI question.

Bolt.new: 15 engineers, $40 million ARR.[4] Cursor: roughly 300 employees, over a billion dollars in annualized revenue.[5] These are numbers that classically scaled SaaS companies with thousands of people don't reach. Both build with intensive AI use. Both are radically small for what they deliver.

The strongest argument against making a general thesis out of this: Bolt.new and Cursor are greenfield builds without legacy, without compliance overhead, without the regulatory requirements of an Austrian financial institution. The radical efficiency gains of these companies are not a representative sample. They're outliers, not the average.

That's correct. And yet.

The METR study[6], a randomized controlled experiment with experienced open-source developers, found that AI tools increased measured working time by 19% rather than reducing it (n=16, 246 tasks). The surprising part: participants believed they were faster. Perceived and measured productivity were far apart. The 2024 DORA report[7] shows a similar pattern: when AI adoption in teams increases by 25 percentage points, estimated throughput drops by 1.5% and stability by 7.2%.

I read this not as a refutation of the thesis that AI compresses teams but as a refinement: AI shifts the bottleneck. Code gets cheaper. Review, CI, coordination, and verification get more expensive. Kent Beck, Laura Tacho, and Steve Yegge put it this way at a gathering around the 25th anniversary of the Agile Manifesto in 2026: "We remain skeptical of the promise of any technology to improve organizational performance without first addressing human and systems-level constraints."[8] Teams that don't address this explicitly gain on one end and lose on the other.

What does this mean for Conway? Team compression through AI is happening, but not uniformly, and not everywhere at once. The effect is real and demonstrable in greenfield startups. How it plays out in enterprise legacy with compliance requirements is still open. From here it gets somewhat more speculative.

Gergely Orosz investigated how Cursor is structured internally. The answer: "mostly a large monolith... deployed as one."[9]

You could read that as an isolated architecture decision. I read it as Conway confirmation. Cursor is a tightly communicating team building tightly coupled systems. Conway would predict exactly that for a team this size. It doesn't prove the monolith is the reason for their success. But it fits the thesis.

DX research provides an operationalizable heuristic: microservices "excel when teams grow beyond 10-15 developers."[10] Below that, the overhead outweighs the benefits in most contexts: separate deployment pipelines, service discovery, network latency, distributed tracing. Not an absolute, but a usable threshold for an initial assessment.

The arithmetic then goes like this: if AI effectively halves teams -- through productivity gains or through deliberate downsizing -- organizations drop below this threshold. And Conway says: then you build monoliths, whether you want to or not.
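That arithmetic can be sketched in a few lines of Python. The 10-15 range is the DX heuristic cited above; the function names, the three labels, and the 0.5 compression factor are illustrative assumptions, not taken from any cited source.

```python
# DX heuristic cited in the text: microservices "excel when teams grow
# beyond 10-15 developers". Everything else here is a hypothetical sketch.
DX_THRESHOLD_LOW = 10
DX_THRESHOLD_HIGH = 15


def conway_default(team_size: int) -> str:
    """Architecture that team size alone would suggest (illustrative labels)."""
    if team_size < DX_THRESHOLD_LOW:
        return "monolith"
    if team_size <= DX_THRESHOLD_HIGH:
        return "gray zone"
    return "microservices"


def after_compression(team_size: int, factor: float = 0.5) -> int:
    """Team size after compression; factor=0.5 models 'halving' (an assumption).

    The result is truncated to a whole number and never drops below 1.
    """
    return max(1, int(team_size * factor))


# Compare the suggested default before and after halving a few team sizes.
for before in (8, 18, 24, 40):
    after = after_compression(before)
    print(f"{before:>2} -> {after:>2}: "
          f"{conway_default(before)} -> {conway_default(after)}")
```

In this sketch a team of 18 sits above the threshold before halving and below it afterwards, while a team of 40 stays above it either way. Which teams actually cross the line depends on the real compression factor, which the sources above leave open.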

In 2015, you needed a justification for the monolith. "We're starting small." "Haven't found a clean split yet." "Product-market fit first, then architecture." Those were the rationalizations. Microservices were the target state. The monolith was technical debt in waiting.

That has flipped, at least for teams that are actually getting smaller or staying small.

This isn't a new argument. DHH has been advocating for the "Majestic Monolith"[11] for years and published a concrete guide in 2023 on how organizations can recover from prematurely adopted microservices[12]. Amazon Prime Video moved a monitoring service from microservices to monolith in 2023 -- 90% cost reduction[13]. Kelsey Hightower has framed the core since 2020: microservices are not a best practice, they're a tradeoff -- and that tradeoff needs honest evaluation based on actual conditions, not industry fashion[14].

Re-evaluating that tradeoff on a regular schedule is the actual point. And that's precisely why now is the right moment: AI is changing team sizes. Anyone who built their architecture decision on assumptions about team structure -- and most did -- needs to check whether those assumptions still hold. Not because monoliths are better. But because the input variable has changed.

The DX heuristic is operationalizable[10]: below ten to fifteen people on the development team, microservices as a default isn't justified by team structure alone. You need other reasons: significantly different deployment frequencies, different scaling requirements, organizationally separate ownership. Those exist. But they now need to be explained.

The question is no longer "What services do we need?" but "How big is our team really, and how does it communicate internally?" Conway knows the answer once you tell him the team size.

What I observe in trainings and customer conversations: the question "monolith or microservices?" is rarely asked explicitly. It's answered implicitly by team size. Sometimes consciously, mostly not. The problem is the distributed monolith that emerges when the decision stays unconscious.

Technically deployed separately, but functionally coupled so tightly that no service can be changed without touching five others. The worst of both worlds. Kelsey Hightower predicted this in 2017: "Monolithic applications will be back in style after people discover the drawbacks of distributed monolithic applications."[15] Conway would have said the same thing, just more formally: you choose the microservices form but keep the monolith communication structure. The result isn't a compromise. It's an anti-pattern.

The real recommendation here isn't an architecture decision. It's a diagnostic question: does your architecture still fit your team size?

Three steps for Monday morning.

First, map the communication structure. Who talks to whom daily? Where are the informal knowledge holders? Where do bottlenecks form because someone has to wait for someone else? That's Conway's Law as a diagnostic tool.

Second, apply the DX threshold. If the development team is below ten to fifteen people -- through AI efficiency, through choice, through market pressure -- microservices are no longer the automatic default. They now need an active justification, not a free pass.

Third, watch for the distributed monolith. If deployment units are technically separate but change frequency stays synchronized, if a deployment requires three coordination meetings, then you don't have a microservices advantage. You have microservices overhead without microservices benefit.
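The third step can be approximated from data most teams already have. The sketch below assumes a change log in which each entry lists the services touched by one change (a release, a ticket, or a merged pull request). It then computes how often each pair of services changes together. The log format, the function names, and the 0.7 cutoff are all hypothetical, and the Jaccard ratio is one possible measure of "synchronized change frequency", not an established standard.

```python
from collections import Counter
from itertools import combinations


def co_change_ratios(change_sets):
    """Jaccard ratio per service pair.

    ratio = changes touching both services / changes touching either one.
    A ratio near 1.0 means the two services almost never change alone.
    """
    touched = Counter()   # number of changes touching each service
    together = Counter()  # number of changes touching both services of a pair
    for services in change_sets:
        unique = sorted(set(services))
        touched.update(unique)
        together.update(combinations(unique, 2))
    return {
        pair: n / (touched[pair[0]] + touched[pair[1]] - n)
        for pair, n in together.items()
    }


def coupled_pairs(change_sets, threshold=0.7):
    """Service pairs whose co-change ratio meets the (assumed) threshold."""
    ratios = co_change_ratios(change_sets)
    return sorted(pair for pair, ratio in ratios.items() if ratio >= threshold)


# Hypothetical change log: each entry lists the services one change touched.
changes = [
    ["orders", "billing", "inventory"],
    ["orders", "billing"],
    ["orders", "billing", "inventory"],
    ["search"],
    ["orders", "billing"],
    ["search", "inventory"],
]

print(coupled_pairs(changes))
```

In this made-up log, `orders` and `billing` never change independently, so they are flagged as a candidate for merging or for a closer look at their boundary. A high ratio is a prompt for a conversation with the team, not a verdict: two services can legitimately change together for a while during a migration.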

A well-written monolith that's comprehensible and changeable for a small team beats a poorly managed microservices system. Every time. The question is whether you made that decision explicitly, or whether Conway's Law is making it for you.

This post is part of a series on AI and software architecture. The previous parts cover the Five Levels of AI Development, Dark Factory Architecture, and the Dark Factory Gap.

Text generation with Claude Opus 4.6. In practice, that doesn't mean "AI writes, human approves" but an iterative dialogue: I bring the thesis and core sources, the model writes an initial draft, I rework structure and argumentation, and then we go into research together -- which voices are missing, which counterarguments aren't addressed strongly enough, which references would improve the article.

I describe the full workflow in AI-Assisted Knowledge Work: How I'm Rebuilding My Research and Writing Process.

[1] Conway, Melvin E. (1968). How Do Committees Invent? Datamation 14(4), April 1968. melconway.com (also available as PDF).

[2] Fowler, Martin (2022). Conway's Law. martinfowler.com.

[3] Skelton, Matthew & Pais, Manuel (2025). Team Topologies. 2nd Edition. IT Revolution. teamtopologies.com.

[4] Neu-Ner, Lior (2025). From $0 to $40M ARR: Inside the Tech Stack and Team Behind Bolt.new. PostHog. posthog.com.

[5] Cursor (2025). Series D Announcement. November 13, 2025. cursor.com.

[6] Becker, Sören et al. / METR (2025). Measuring the Impact of AI on Experienced Open-Source Developer Productivity. arXiv:2507.09089. arxiv.org.

[7] Google Cloud / DORA Team (2024). Announcing the 2024 DORA Report. cloud.google.com; full report: dora.dev.

[8] Beck, Kent; Tacho, Laura & Yegge, Steve (2026). Cited in Orosz, Gergely. The Future of Software Engineering with AI: Six Predictions. The Pragmatic Engineer. pragmaticengineer.com.

[9] Orosz, Gergely (2025). Building Cursor: The $2.5B AI Code Editor. The Pragmatic Engineer. pragmaticengineer.com.

[10] DX (2025). Monolithic vs microservices architecture: when to use which. DX Blog. getdx.com.

[11] DHH (2016). The Majestic Monolith. Signal v. Noise. signalvnoise.com.

[12] DHH (2023). How to recover from microservices. world.hey.com.

[13] Kolny, Marcin (2023). Scaling up the Prime Video audio/video monitoring service and reducing costs by 90%. Amazon Prime Video Tech Blog. Original no longer available; summarized at infoq.com.

[14] Hightower, Kelsey (2020). Monoliths are the future. Go Time #114 / Changelog. changelog.com.

[15] Hightower, Kelsey (2017). Tweet. x.com; archived at web.archive.org.
