Dark Factory Gap: What Happens to Teams, Roles, and Organizations

In Part 1 of this series, we worked through the why: Shapiro's five levels of AI development, Brynjolfsson's J-Curve, and the core thesis that AI tools alone don't deliver productivity -- workflow redesign does. In Part 2, we unpacked the how: Spec-Driven Development, Digital Twin Universe, Scenarios as Holdout-Set. StrongDM as the reference implementation -- three people, three Markdown files, tens of thousands of lines of production code.

Now comes the who. What happens to the people inside this transformation? To the roles we know? To the teams and organizations that are supposed to live through this change?

This is the question that gets skipped in most AI adoption discussions. Not because nobody knows the answer -- but because the answer is uncomfortably complex. No easy narratives ("AI makes all developers redundant") and no soothing ones ("AI creates more jobs than it destroys"). What I observe is more nuanced -- and for organizations in Germany, Austria, and Switzerland, there are genuinely good reasons to see the situation soberly, but not pessimistically.

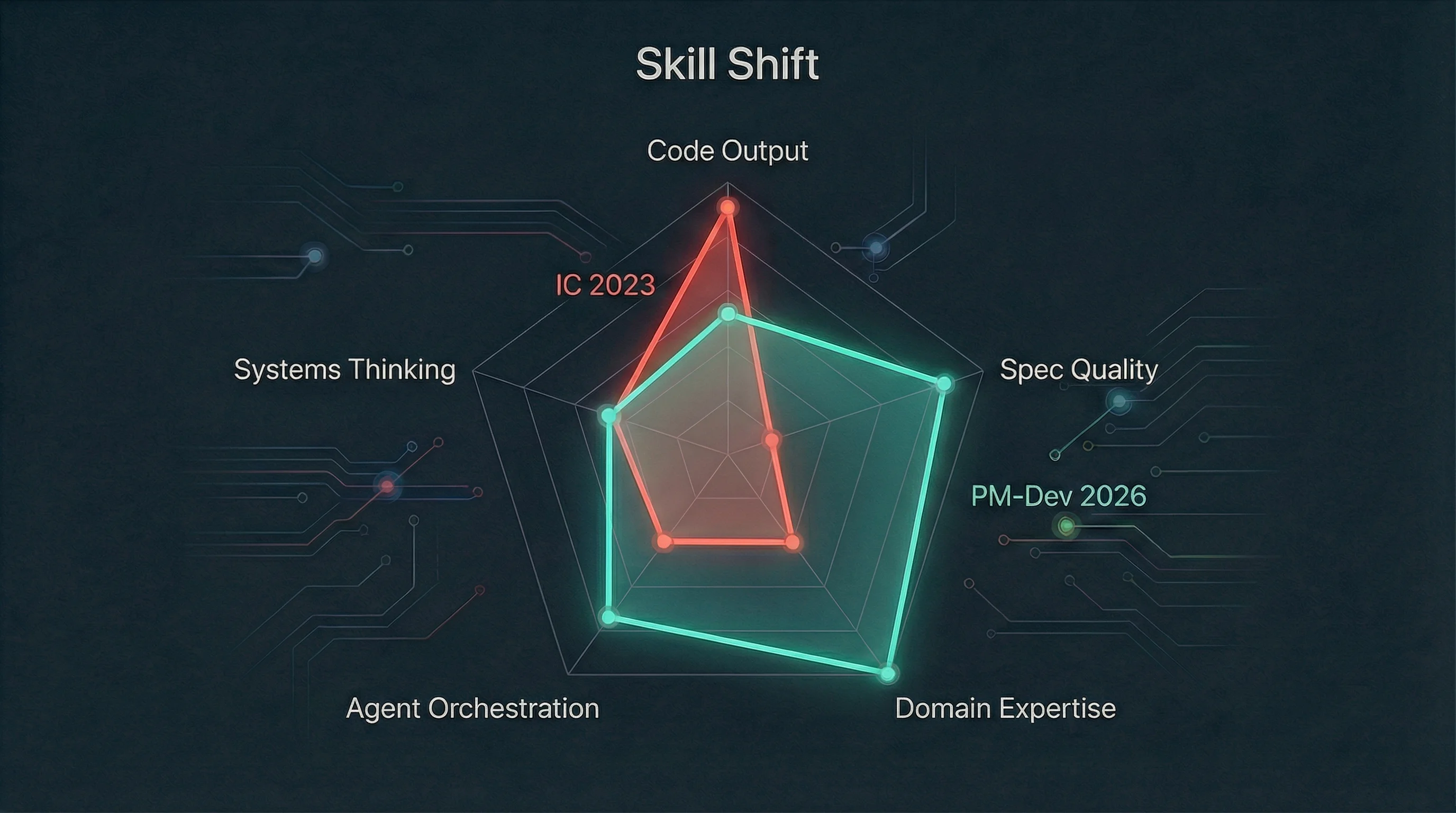

McKinsey's November 2025 analysis [1] describes a new role archetype, citing Cursor CEO Michael Truell: ICs who direct "a junior team of asynchronous agents" -- a blend of Engineering Manager and Individual Contributor. I call this the "PM-Developer": someone who no longer primarily writes code, but orchestrates agents. That's not a futurist statement -- it's a description of a transition already visible in early-adopter teams.

What does that mean concretely? Level 4 in Shapiro's framework -- the "Robotaxi mode" where developers write specs during the day and come back to finished code in the evening -- requires a role definition that the classic "Individual Contributor" simply doesn't fill. Writing code and formulating requirements precisely enough for a machine to implement them reliably are both good software skills -- but they're not the same skill.

InfoQ described Spec-Driven Development in January 2026 [2] as "an architectural pattern that inverts the traditional source of truth by elevating executable specifications above code itself." GitHub has published its open-source Spec Kit [3]; Google's Antigravity project [4] is built around it. The pattern is converging: code is no longer the primary product. The spec is. And writing the spec is a different skill than writing the code -- closer to product management, closer to domain engineering.

CIO.com put it pointedly: "The agile team now consists mainly of humans who all share one basic role: Specifying what AI agents should do." That sounds reductive -- and still isn't entirely wrong. The role diversification at Level 4 is real: Spec-Writer, Agent-Orchestrator, Quality-Validator, Domain-Expert. But all share the core task of telling machines, intelligibly, what is expected.

An observation I think is important: most organizations haven't changed their roles and processes despite AI tools. That's not failure -- it's a rational response to unclear signals. Installing Copilot on top of existing processes and saying "it runs" isn't a lie. The tool does run. That the underlying process remained unchanged doesn't make the short-term decision wrong -- just suboptimal in the medium to long term.

McKinsey's "Unlocking the value of AI in software development" [1] (November 2025) quantifies the difference: a 15-percentage-point performance gap between the most and least AI-effective software organizations studied. McKinsey explicitly describes the leading characteristic of the stronger side: focus on "structured communication of specs" and "problem framing and intent specification." Not tool selection. The ability to formulate requirements precisely.

These organizations have time -- but not unlimited time. Competitive pressure comes not from an abstract technological shift, but from concrete organizations that have already made the transition.

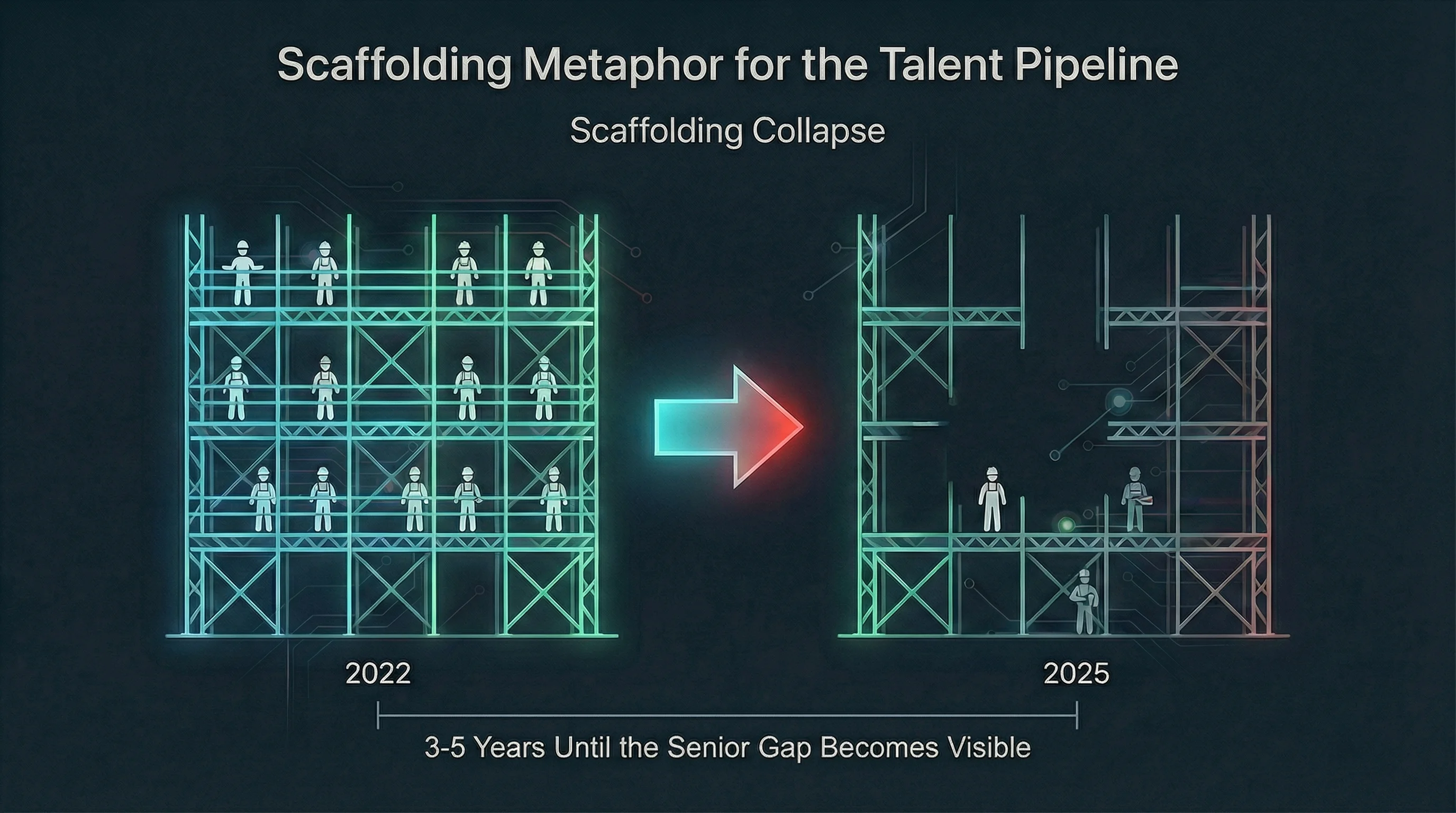

This is the topic that concerns me most. Not because it's more dramatic than others -- but because the consequences become visible most slowly, and are therefore most underestimated.

A study by Hosseini Maasoum and Lichtinger [5] (Harvard, August 2025, SSRN 5425555) analyzed the US software development labor market using a difference-in-differences methodology: in AI-adopting firms, junior employment fell by 7.7% relative to non-adopting firms over six quarters starting Q1 2023. Important caveat from the authors: the junior hiring decline began in 2022 -- in parallel with Fed rate hikes, before broad AI adoption. Causality isn't clean to separate. What the study shows: existing juniors weren't laid off -- promotions actually increased. What fell was the hiring rate for new juniors.
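For readers unfamiliar with the method, here is a minimal sketch of how a difference-in-differences estimate is formed. The index values are hypothetical and chosen only for illustration; they are not data from the study, and the function name `did_estimate` is my own.

```python
def did_estimate(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the control group."""
    return (treated_post - treated_pre) - (control_post - control_pre)


# Hypothetical junior-headcount index (100 = baseline quarter).
# These numbers are illustrative assumptions, NOT figures from the study.
adopters_pre, adopters_post = 100.0, 88.0          # AI-adopting firms
non_adopters_pre, non_adopters_post = 100.0, 95.0  # non-adopting firms

effect = did_estimate(adopters_pre, adopters_post,
                      non_adopters_pre, non_adopters_post)
print(f"Relative effect: {effect:+.1f} index points")
```

In this toy case both groups shrink, which is what a shared shock such as rate hikes would produce. The estimate keeps only the part of the decline that is specific to adopters, and it does so only under the assumption that both groups would otherwise have moved in parallel. That assumption is why the authors' caveat matters.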

Other data confirms this. ISE figures, reported by The Register [6] among others, show a 46% decline in UK tech graduate positions for 2024, with a further projected decline of 53% by 2026. High Fliers Research [7] records a 14.6% decline overall for the 100 largest UK employers -- the steepest drop since the 2009 financial crisis. Various analyses of Indeed and FRED data [8] show that US software developer job postings are roughly 67-70% below their 2022 peak (the exact figure varies by definition and time period). Brynjolfsson, Chandar and Chen measure in "Canaries in the Coal Mine" [9] (November 2025) a methodologically more tightly defined 13% relative decline for the 22-25 age group.

Most of these studies come from the US and UK markets. A comparable difference-in-differences analysis for DACH is absent. But the direction is visible there too: Indeed Hiring Lab Germany [10] shows a significant decline in junior software developer postings since 2020 -- while the decline for senior positions is much smaller. The gap between junior and senior demand is widening significantly in Germany as well.

Molly Kinder from the Brookings Institution [11] framed the problem sharply in January 2026: "To save entry-level jobs from AI, look to the medical residency model." Dario Amodei (Anthropic) and Demis Hassabis (Google DeepMind) both independently warned at Davos 2026 [12] about AI's impact on entry-level jobs. That's not standard corporate communication.

What's at stake here isn't just cheap labor. Junior developers are the knowledge transfer system of the industry.

You enter as a junior. You handle tasks that are too expensive for seniors -- boilerplate, simple bugfixes, documentation. In the process, you learn the system, the conventions, the historical decisions. Juniors become mids, mids become seniors. The next generation of junior mentors and senior architects comes from today's junior cohorts.

If the junior pipeline collapses, that becomes visible in three to five years at the senior level. Not as a wave of layoffs, but as an experience gap. Organizations that stop training today will, in five years, be searching for seniors who have never trained juniors.

The Harvard study [5] shows that existing juniors are actually being promoted faster -- that sounds positive. But acceleration without mentoring depth is a different risk: titles without proportional experience. That's not speculation -- it's the logical downstream consequence of "fewer juniors in the system, same senior demand."

There's also a finding from ZEW Mannheim [13] (Schlenker and Brüll, 2025): over 60% of employees in Germany already use AI at work -- including in companies without formal AI rollout, as so-called "Shadow AI Usage." The logical consequence -- and here I'm moving beyond the empirical ZEW finding into my own inference -- is a growing "verification burden": as AI-generated code becomes the norm, the core task shifts from writing to critical reviewing. Organizations need developers who act as skeptical editors. But that presupposes exactly the depth of experience that juniors by definition don't yet have. The requirement shifts from "write code" to "verify AI output" -- and that systematically favors seniors.

Kinder's medical residency framing is precise: a system that doesn't train professionals through structured, supervised practice doesn't produce professionals. It buys them -- and when the market tightens, prices rise or quality drops.

In Germany, Bitkom [14] measured roughly 109,000 unfilled IT positions in a 2025 study of 855 companies -- a decline from the record of 149,000 in 2023. According to Bitkom, that decline isn't a sign of improvement but a hiring freeze effect. Crucially: the majority of these open positions target experienced specialists, not entry-level. The Hays Skills Index Q4 2024 confirms the trend: specialized IT segments remain demand-resistant while general IT demand declines -- a pattern that hits junior profiles disproportionately hard. At the same time: 27% of surveyed companies expect AI to reduce headcount; 42% expect AI to generate additional IT demand. Both dynamics simultaneously -- not a contradiction, but an indication that the shift runs along experience levels.

Only 5% of German companies are already using AI to substitute IT headcount [14]. Among large companies (250+ employees), it's 21%. SMEs are well below that -- meaning the real transformation pressure in the German Mittelstand is still ahead.

In May 2025, Henry Shi and Jeremiah Owyang published the "Lean AI Native Leaderboard" [15] -- a curated benchmark analysis, not a peer-reviewed dataset: the ten leading AI-native startups achieved an average of $3.48 million in revenue per employee, with an average team size of 24 people.

The individual examples are striking. Cursor: over 1 billion dollars in annualized revenue (November 2025, according to their own Series D post [16]), roughly 300 employees -- that's 3.3 million dollars per head. Midjourney: highly profitable by various estimates with a small team of around 100, without external investment -- one of the few fully independent AI companies. Lovable: 200 million dollars ARR (November 2025), roughly 100 employees.

For comparison: SaaS Capital 2025 reports a median of 130,000 dollars revenue per employee for private SaaS companies [17]; scale-SaaS companies above 50 million dollars ARR target around 300,000. According to Owyang and Shi, AI-native firms operate with "7-8x fewer employees per revenue dollar" than traditional SaaS companies.
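The per-head figures above can be reproduced with simple division. The sketch below uses only the rounded numbers quoted in this article (revenue and headcount are approximations, so the results are too); the function and variable names are my own.

```python
def revenue_per_employee(revenue_usd, employees):
    """Annualized revenue divided by headcount."""
    return revenue_usd / employees


# Rounded figures as quoted in the text above.
cursor = revenue_per_employee(1_000_000_000, 300)   # "over 1 billion", ~300 staff
lovable = revenue_per_employee(200_000_000, 100)    # 200M ARR, ~100 staff

leaderboard_avg = 3_480_000      # top-ten average, Lean AI Native Leaderboard
saas_median = 130_000            # SaaS Capital median, private SaaS
scale_saas_target = 300_000      # scale-SaaS target above 50M ARR

ratio_vs_median = leaderboard_avg / saas_median
ratio_vs_scale = leaderboard_avg / scale_saas_target

print(f"Cursor:  ${cursor:,.0f} per employee")
print(f"Lovable: ${lovable:,.0f} per employee")
print(f"Leaderboard average vs. SaaS median: {ratio_vs_median:.1f}x")
print(f"Leaderboard average vs. scale-SaaS:  {ratio_vs_scale:.1f}x")
```

These ratios compare the leaderboard's top-ten average against the two SaaS benchmarks quoted here. They are not an attempt to reproduce the "7-8x" figure from Owyang and Shi, whose baseline is not stated in this article.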

These numbers have often led to a conclusion in public discourse that I think is wrong: everyone gets laid off, enterprise teams shrink to groups of three.

That's not right -- for several reasons. First, AI-native startups are greenfield builds. They start with the assumption that agents write code; legacy structures, governance requirements, regulatory workflows are absent. An Austrian financial institution can't restructure its IT department to the StrongDM model without redesigning half its compliance apparatus.

Second, $3.48 million per employee is the upper end of a distribution, not the new median. It's like benchmarking against the Champions League when you're playing in the second division -- useful as orientation, not as an immediate target.

Third -- and this is what I find most important -- these numbers say nothing about absolute headcounts. An organization that previously needed ten developers and now manages with seven has shifted the ratio, but hasn't eliminated it. The new equilibrium sits somewhere between "same headcounts, significantly more output" and "drastically smaller teams, same output" -- where exactly depends on domain complexity, regulatory requirements, and brownfield burden.

What the numbers do show: the equilibrium assumptions for team size and revenue per head are shifting. Anyone doing headcount planning on 2022 assumptions is planning past reality.

Agile was a response to waterfall -- to long planning cycles, rigid requirements documents, late feedback. The solution: short iterations, tight feedback loops, Working Software over Comprehensive Documentation. For human teams, that was right.

For agents, it's the wrong premise.

On its DevOps Blog, AWS introduced the concept of "Bolts": shorter work cycles, measured in hours rather than weeks. "Epics" become "Units of Work." The sprint as a two-week rhythm of human team coordination loses its justification when agents work asynchronously and in hours rather than days. P3 Group [18], a German consulting firm, described the transition from "Sprints to Swarms" in September 2025 as three paradigms: Agent Swarms, Orchestrated Pipelines, Prompt-Chained Workflows. Their verdict: "Traditional Scrum becomes a strategic liability" in Level 4 environments.

Ethan Mollick [19] (Wharton) described StrongDM's radical approach [20] -- no sprints, no standups, no Jira, no human code review -- as "truly radical" and simultaneously argued that more such leapfrog ideas are needed: not integrating AI into old processes, but redesigning processes from scratch. The numbers he cites support this: AI layered onto existing processes yields 5-15% gains; fundamental process redesign yields "dramatically more." Shapiro estimates [21] -- and I share this assessment -- that it's not more of the same but a different order of magnitude.

This makes methodological sense. Agile solved the coordination problem of human teams: how do you synchronize many individual work streams? Sprints, standups, and Jira are coordination instruments. Agents don't need coordination via sprints -- they need specification clarity. The methodology has to reflect that.

I use the term cautiously, because it's easily misunderstood. It's not about abolishing iterative work -- it's about the fact that iterations no longer need to be built around human communication cycles.

Spec-Driven Development isn't waterfall. It's agile in a new sense: specs iterate quickly, agents implement almost immediately, holdout scenarios validate continuously. The iteration happens on the spec, not on the code. That's a different rhythm -- faster in some dimensions, more demanding in others (spec quality as a continuous discipline, not a one-time artifact).
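To make that rhythm concrete, here is a deliberately tiny sketch of the loop: the spec is revised, an agent produces an implementation, and holdout scenarios decide whether it is accepted. Everything in it is hypothetical. `toy_agent` is a hard-coded stand-in for a real coding agent, and the names `Scenario`, `failing_scenarios`, and `iterate_on_spec` are mine, not taken from StrongDM or any other tool mentioned here.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence, Tuple

Implementation = Callable[[int], int]
Agent = Callable[[str], Implementation]  # hypothetical: spec text in, code out


@dataclass(frozen=True)
class Scenario:
    """One holdout case the agent never sees while implementing."""
    name: str
    given: int
    expected: int


def failing_scenarios(impl: Implementation,
                      holdout: Sequence[Scenario]) -> List[str]:
    """Return the names of holdout scenarios the implementation fails."""
    failures = []
    for scenario in holdout:
        try:
            passed = impl(scenario.given) == scenario.expected
        except Exception:
            passed = False  # a crash counts as a failed scenario
        if not passed:
            failures.append(scenario.name)
    return failures


def iterate_on_spec(spec_versions: Sequence[str], agent: Agent,
                    holdout: Sequence[Scenario]
                    ) -> Optional[Tuple[int, Implementation]]:
    """Try each spec revision in order; accept the first that passes holdout."""
    for version, spec in enumerate(spec_versions, start=1):
        impl = agent(spec)
        if not failing_scenarios(impl, holdout):
            return version, impl
    return None


def toy_agent(spec: str) -> Implementation:
    """Stand-in agent: only handles edge cases the spec actually mentions."""
    if "clamp" in spec:
        return lambda pct: max(0, min(100, pct))
    return lambda pct: pct


HOLDOUT = [
    Scenario("normal discount", 50, 50),
    Scenario("over 100 percent", 150, 100),
    Scenario("negative discount", -5, 0),
]

SPECS = [
    "Return the discount percentage.",
    "Return the discount percentage; clamp it to the range 0..100.",
]

result = iterate_on_spec(SPECS, toy_agent, HOLDOUT)
accepted_version = result[0] if result else None
print(f"Accepted spec version: {accepted_version}")
```

The first spec fails two holdout scenarios because it never mentions out-of-range values. The fix is made in the spec, not in the generated code, which is the sense in which iteration moves from code to spec.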

What's missing in many teams beginning the transition: abolishing Jira is easy. Building a spec culture in which requirements are precise enough for an agent to work with is hard. That requires different skills, different review processes, different quality gates.

This is the assessment where I diverge most strongly from the pessimistic mainstream discussion. The DACH region has structural advantages that barely appear in the global debate.

AI transformation is not just tooling -- it's primarily process and role restructuring. When new AI-assisted processes and work areas emerge, the works council (Betriebsrat -- the mandatory employee representation body in German-speaking countries) belongs on the map as its own workstream from the beginning -- similar to security, compliance, or architecture. Bring them in early, make the control and data framework transparent (e.g., logging, evaluations, monitoring boundaries), and plan qualifications and transitions explicitly: this reduces escalation risk, makes decisions more robust, and accelerates adoption because later blockages and "surprises" become rarer.

Note: There is already case law and a clear legal framework around this -- including co-determination questions around monitoring and work methods [22]. I'm not a lawyer; this is a management recommendation from a transformation perspective. For the concrete implementation, a thorough employment law review and proper alignment with the works council and HR are worth the investment.

Molly Kinder's residency model proposal for entry-level jobs connects with a structure that has existed in DACH for decades: the dual apprenticeship system. Apprentices split their time between an employer and a vocational school, with formal training contracts and certified outcomes -- structured, supervised practice through real work.

CEDEFOP [23] observes that Germany is actively integrating AI as a "key VET competence" into existing training frameworks -- not a restart, just a curriculum update. TRUMPF [24] introduced a dual-study program in "Data Science and Artificial Intelligence" in 2025 -- an early example of how industrial companies are integrating AI competencies directly into training structures.

The data shows that the dual system is actually functioning as a counterweight to the open junior market. In Switzerland, ICT apprenticeship contracts rose by 8.6% in 2024 to 3,623 new contracts -- with a 96% satisfaction rate [25]. In Austria, the number of IT apprentices in their first year of training has nearly doubled since 2017: from 520 to 968 (2023) [26]. While the open market for university graduates collapses, the structured training pipeline is growing. That's not coincidence -- it's the difference between "we hire when we need someone" and "we train because we know we'll need someone."
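As a quick sanity check on the growth figures, the arithmetic can be sketched as follows. The implied Swiss prior-year count is derived from the quoted 8.6% and 3,623; it is a back-calculation, not a number from the source.

```python
def pct_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100


# Austria: first-year IT apprentices, 2017 vs. 2023 (figures quoted above).
austria_growth = pct_change(520, 968)

# Switzerland: back out the prior-year count implied by +8.6% -> 3,623.
# Derived value, an assumption of this sketch, not reported in the source.
swiss_implied_prior = 3623 / 1.086

print(f"Austria: +{austria_growth:.1f}%")
print(f"Switzerland, implied prior-year contracts: ~{swiss_implied_prior:.0f}")
```

An increase of roughly 86% is what "nearly doubled" means here; a true doubling would be 1,040 first-year apprentices.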

Kinder's core argument is that juniors must be trained through structured, supervised practice -- exactly what the dual system structurally delivers: supervised learning through real work, with defined training contracts, with an institutional framework. That's not coincidence, it's a design advantage of the system.

The challenge remains: what is "real work" for an AI apprentice when AI itself handles most of the technical execution? The answer lies in spec writing, domain engineering, quality validation -- skills that can be built through supervised practice. The dual training system needs to adapt its content definition, but has the framework to do it.

The data on early movers in the DACH region is real and significant.

Deutsche Telekom [27] reports that roughly 66,000 employees participated in AI training in 2023; in 2024, 30,000 internal users received hands-on training in AI use (prompting). CEO Tim Höttges speaks of over 100,000 colleagues trained in AI since 2023. Simultaneously, a "Junior Software Development Academy" is running that retrains customer service advisors as developers. 5,400 apprenticeship positions are planned for 2025/2026.

SAP [28] has announced a target of 12 million people trained externally in AI by 2030 and has built an extensive portfolio of free AI courses -- ecosystem development at scale.

Siemens [29] has announced 1,700 new apprenticeship positions for 2025 and explicitly integrates "digital and AI" into all training profiles.

What distinguishes these programs: they're not defensive "we're saving jobs" announcements. They're offensive investments in capability building -- with the assumption that AI changes work but doesn't eliminate it, and that organizations that manage the transition deliberately come out better than those waiting for the dust to settle.

I work with teams standing at this transition. What I see is more consistent than you might expect.

The Infrastructure-as-Code parallel applies here too. When IaC arrived in the industry, the same discussion emerged: will system administrators be replaced? The answer: no, but the role changed fundamentally. Those who used to provision servers now write Terraform and Ansible. The skills shifted -- from "I know the CLI commands" to "I can describe the system in code." Those who didn't make the transition weren't laid off, but they were marginalized. Those who did are more in demand than ever.

The same pattern is emerging for software development. Those who make the shift from code writing to spec writing, from implementer to quality validator, from individual contributor to agent orchestrator, change their profile rather than eliminate it. The value shifts: away from "who can type code fast", toward "who can say precisely what is wanted." That's an upgrade for domain expertise and systems thinking. Both are hard to automate.

What I observe in teams that enter the transition early: it's easier for those who were already good at specifying. Not necessarily formally -- the ability to think through a system completely, anticipate edge cases, formulate requirements leaving no room for interpretation -- that's the core skill of the Level 4 developer. And it's a skill that can be developed.

At Infralovers, we've increasingly aligned our training and coaching in recent months to this shift. Not because we were wrong before -- AI Coding Essentials was always more than prompt engineering. But because we see demand shifting: from "how do I use Cursor" to "how do I structure work so agents deliver reliably." That's the deeper question. And the answer lies in spec discipline, in holdout-set thinking, in the ability to encode domain knowledge in machine-readable form.

What gives me the most confidence about the DACH perspective isn't regulation alone, and it's not just the training structure -- it's the combination. Works councils force structured transition planning. The dual apprenticeship system provides the framework for supervised practice. And DACH engineering culture -- specification-first thinking, thoroughness, systems perspective -- is surprisingly well-prepared for Spec-Driven Development, when you frame it that way. The term is new. The thinking pattern isn't.

Concrete steps, not generic recommendations.

The Harvard study [5] shows that the hiring decline is driven by freeze, not by layoffs. That's a deliberate decision, and it has consequences in three to five years. Those not hiring juniors today aren't building mentoring capacity today.

My assessment: it's worth redefining the junior role -- away from "handles boilerplate" toward "learns to specify precisely, encode domain knowledge, write scenarios." That's a higher-value entry job than before -- and one that produces the next generation of seniors who still have domain expertise.

The "PM-Developer" isn't self-executing. If you tell developers "use AI more" but don't update the role profile, evaluation criteria, and career paths, you get role confusion. Those evaluated on code output write code. Those meant to be evaluated on spec quality and agent output need explicit new criteria.

Concrete step: in the next performance review cycle, introduce an explicit area for "spec writing quality" and "agent orchestration effectiveness." That sounds bureaucratic. It's the signal-setting mechanism that communicates priorities.

For organizations in Germany and Austria, this is particularly concrete: organizations that plan AI transformation together with the works council have a process that holds afterward. Organizations that announce restructuring and then enter social plan negotiations lose time and trust.

Early means: before a concrete restructuring plan is on the table. A joint workshop on "what does AI change about our work" isn't an admission of weakness -- it's the foundation for a works agreement that enables AI adoption instead of blocking it.

AT&T invested roughly one billion dollars in a multi-year reskilling program ("Future Ready") [30] and made upskilling a strategic core lever. The results were strong: over 180,000 of 203,000 employees participated, and half of all internal positions were filled with reskilled employees. But the time horizon -- seven to eight years from recognition to measurable results -- is key.

That means: those who start now will have scalable results by 2033. Those who wait until the pressure is undeniable start in 2028 and have scalable results in 2035. In an industry changing in 18-month cycles, that's a significant difference.
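The dates follow directly from the seven-to-eight-year horizon. A minimal sketch, assuming "now" means 2026 (the article does not state the year explicitly; I infer it from the publication dates of the sources cited):

```python
def results_window(start_year, horizon_years=(7, 8)):
    """Earliest and latest year in which scalable results can be expected."""
    return tuple(start_year + h for h in horizon_years)


# Assumption: "now" is 2026, inferred from the cited sources.
start_now = results_window(2026)
start_late = results_window(2028)

print(f"Start 2026 -> results in {start_now[0]}-{start_now[1]}")
print(f"Start 2028 -> results in {start_late[0]}-{start_late[1]}")
```

The years named in the text (2033 and 2035) correspond to the lower, seven-year end of the range; with eight years, each date moves out by one more year.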

Deutsche Telekom, SAP, and Siemens have recognized this -- not because they're AI enthusiasts, but because their planning horizons for talent development are long enough that the seven-year horizon becomes visible in strategic calculations.

This is the third and final part of the series. What we've worked through across three posts:

Part 1 explained the mechanics -- why AI tools alone aren't enough, why the J-Curve is a transit stage, not an endpoint. Part 2 took apart the architecture -- how Spec-Driven Development, Digital Twins, and holdout-set thinking interact when agents write the code. This part asked the organizational question: what happens to the people inside it?

The answer: everything changes. Roles, metrics, career paths, the junior pipeline, the process framework. But "everything changes" is not a reason for panic -- it's a reason for planning. Organizations that plan explicitly early on -- rewriting roles, redefining junior training, involving works councils, building spec culture -- will be better positioned in two to three years than those waiting for the status quo to stabilize.

The seven-to-eight-year AT&T time horizon applies. The window for good planning is open now. It won't stay open forever.

Research, structure, argument, and all content decisions are mine. Text was produced in collaboration with Claude (Anthropic) -- the same tool I use in my own AI workflow at Level 4. Source research via Gemini, ChatGPT, and Claude; DACH-specific data research via a targeted research brief when the initial sources showed a geographic gap. Five rounds of fact-checking, manual verification of every individual source, external holdout check via GPTZero (0% plagiarism score -- citations verified throughout). Infographics generated with Gemini, reviewed against article content after each editing pass. All drafts, research briefs, and research results are version-controlled in Git.

The process this article describes -- write the spec, let the agent work, critically review the output -- is the same process used to write it. I describe the full workflow in AI-Assisted Knowledge Work: How I Am Rebuilding My Research and Writing Process.

[1] McKinsey (2025). Unlocking the value of AI in software development. November 3, 2025. mckinsey.com

[2] InfoQ (2026). Spec-Driven Development: Architecture for the Agentic Era. January 12, 2026. infoq.com

[3] GitHub (2025). Spec-Driven Development with AI: Open Source Toolkit. September 2025. github.blog

[4] Google (2025). Build with Google Antigravity: Agentic Development Platform. November 2025. developers.googleblog.com

[5] Hosseini Maasoum, Seyed Mahdi & Lichtinger, Guy (2025). Generative AI as Seniority-Biased Technological Change: Evidence from U.S. Resume and Job Posting Data. August 2025. ssrn.com

[6] The Register (2025). UK tech grad hiring down 46%. October 2025. theregister.com

[7] High Fliers Research (2025). The Graduate Market in 2025. online.flippingbook.com

[8] FRED (2025). Software Developer Job Postings. BLS/Indeed. fred.stlouisfed.org

[9] Brynjolfsson, Chandar & Chen (2025). Canaries in the Coal Mine. November 2025. nber.org

[10] Indeed Hiring Lab Germany (2024). Jobs & Hiring Trends Report 2025. December 2024. hiringlab.org

[11] Kinder, Molly (2026). To save entry-level jobs from AI, look to the medical residency model. Brookings Institution, January 2026. brookings.edu

[12] WEF (2026). AI and young people / entry-level impact. Davos 2026. weforum.org

[13] ZEW Mannheim (2025). Employees Use AI Even Without Formal Introduction by Their Employers. Schlenker & Brüll. zew.de

[14] Bitkom (2025). IT Skills Shortage in Germany. Study, 855 companies. bitkom.org (PDF)

[15] Shi, Henry & Owyang, Jeremiah (2025). Lean AI Native Leaderboard. May 2025. leanaileaderboard.com

[16] Cursor (2025). Series D Announcement. November 2025. cursor.com

[17] SaaS Capital (2025). 2025 Revenue Per Employee Benchmarks for Private SaaS Companies. 14th annual survey, 1,000+ companies. saas-capital.com

[18] P3 Group (2025). From Sprints to Swarms: Navigating the Post-Agile Future. September 2025. p3-group.com

[19] Mollick, Ethan (2026). Management as AI Superpower. One Useful Thing, January 2026. oneusefulthing.org

[20] StrongDM (2026). Software Factory Manifesto. factory.strongdm.ai

[21] Shapiro, Dan (2026). The Five Levels: from Spicy Autocomplete to the Software Factory. January 23, 2026. danshapiro.com

[22] ArbG Hamburg (2024). Decision 24 BVGa 1/24. January 16, 2024. First German court ruling on AI and works council co-determination. landesrecht-hamburg.de

[23] CEDEFOP (2025). Germany: AI emerging as key VET competence. cedefop.europa.eu

[24] TRUMPF (2025). Apprenticeship start: TRUMPF trains AI professionals for the first time. September 2025. trumpf.com

[25] ICT-Berufsbildung Schweiz (2024). High satisfaction and rising apprenticeship numbers 2024 (+8.6%). ict-berufsbildung.ch

[26] WKO (2025). Labor Force Radar 2025 -- IT Professionals Austria. wko.at

[27] Deutsche Telekom (2024). Corporate Responsibility Report 2024. CEO Tim Höttges on AI training. telekom.com

[28] SAP (2026). Pledges to Equip 12 Million People with AI-Ready Skills by 2030. February 2026. news.sap.com

[29] Siemens (2025). 1,700 new apprentices with AI/digital focus. September 2025. news.europawire.eu

[30] CNBC (2018). AT&T's $1 billion gambit: Retraining nearly half its workforce for jobs of the future. March 2018. cnbc.com
