Dark Factory Gap: What Happens to Teams, Roles, and Organizations

In Part 1 of this series, we worked through the why: Shapiro's five levels of AI development, Brynjolfsson's J-Curve, and the core thesis that AI tools alone

In Part 1 of this series, we covered the why: why AI tools alone don't deliver productivity, what Shapiro's five levels have to do with Brynjolfsson's J-Curve, and why Level 2 -- pair programming with AI -- feels like a finish line when it's actually the beginning of the real change.

Now comes the how. What separates a team stuck at Level 2 from one operating at Level 4? Not the tools -- both have access to the same tools. The architecture. The disciplines. The ways of structuring work when machines are doing the executing.

I've kept this post deliberately technical. Not because the audience is all architects -- but because architecture decisions have direct consequences for leadership decisions. Understanding how Spec-Driven Development, Digital Twins, and Scenarios as a Holdout-Set interact gives you the ability to assess what the transition to Level 4 actually means: for roles, for processes, for risk management.

The reference example is StrongDM. Not a hypothetical framework, but a system being built since July 2025. On February 6, 2026, the team published their manifesto1; Simon Willison, one of the most careful observers in the developer-tooling space, visited the team in October 2025 and wrote about it2. What follows draws from these primary sources.

Three people. Justin McCarthy (Co-Founder and CTO), Jay Taylor, and Navan Chauhan. Since July 14, 2025, they've been building CXDB -- an AI Context Store -- not through handwritten code, but through an open-source agent called "Attractor"3. Two founding rules govern the project: "Code must not be written by humans" and "Code must not be reviewed by humans."

Willison described the core repository after his visit2: "contains no code at all -- just three markdown files describing the spec for the software in meticulous detail." Those three Markdown files are the core; the full project includes additional configuration and tooling. Hacker News commenters reading through the specs counted roughly 6,000 to 7,000 lines of natural-language specification. The output after seven months: 16,000 lines of Rust, 9,500 lines of Go, 6,700 lines of TypeScript -- a three-layer system of React UI, Go Gateway, and Rust Server. Willison called it "the most ambitious form of AI-assisted software development I've seen yet."

What makes Attractor architecturally interesting? Not that it uses AI. Hundreds of tools do that. But how it uses AI -- and which decisions the system makes to keep the agent steerable, auditable, and productive.

The heart of the system is NLSpec -- Natural Language Specification3. Not a traditional requirements document. Not a Jira board. Not a Confluence page that someone will get around to reading eventually. The spec is the control instrument of the system.

NLSpec is structured natural English with formal constraints. Not formal logic, not code -- but also not prose that invites interpretation. Somewhere between a structured requirements document and a type system: precise enough that an agent can process it consistently with little room for ambiguity, readable enough that a human can write and review it.

That shift is fundamental. Traditional development writes specs for people, who fill in the gaps with judgment, experience, and a quick Slack message. "What did you mean by that?" is a valid clarification strategy with humans. With agents, it isn't. The spec must be complete. Not formally complete in the TLA+4 or Alloy5 sense -- formal specification languages designed to mathematically verify system behavior -- but precise enough that the agent doesn't have to guess.

McCarthy's manifesto1 puts it plainly: the bottleneck has shifted -- from implementation speed to spec quality. And spec quality is a function of how deeply you understand your system, your customers, and the problem.

That's not a trivial observation. It's a shift in the core problem of software engineering.

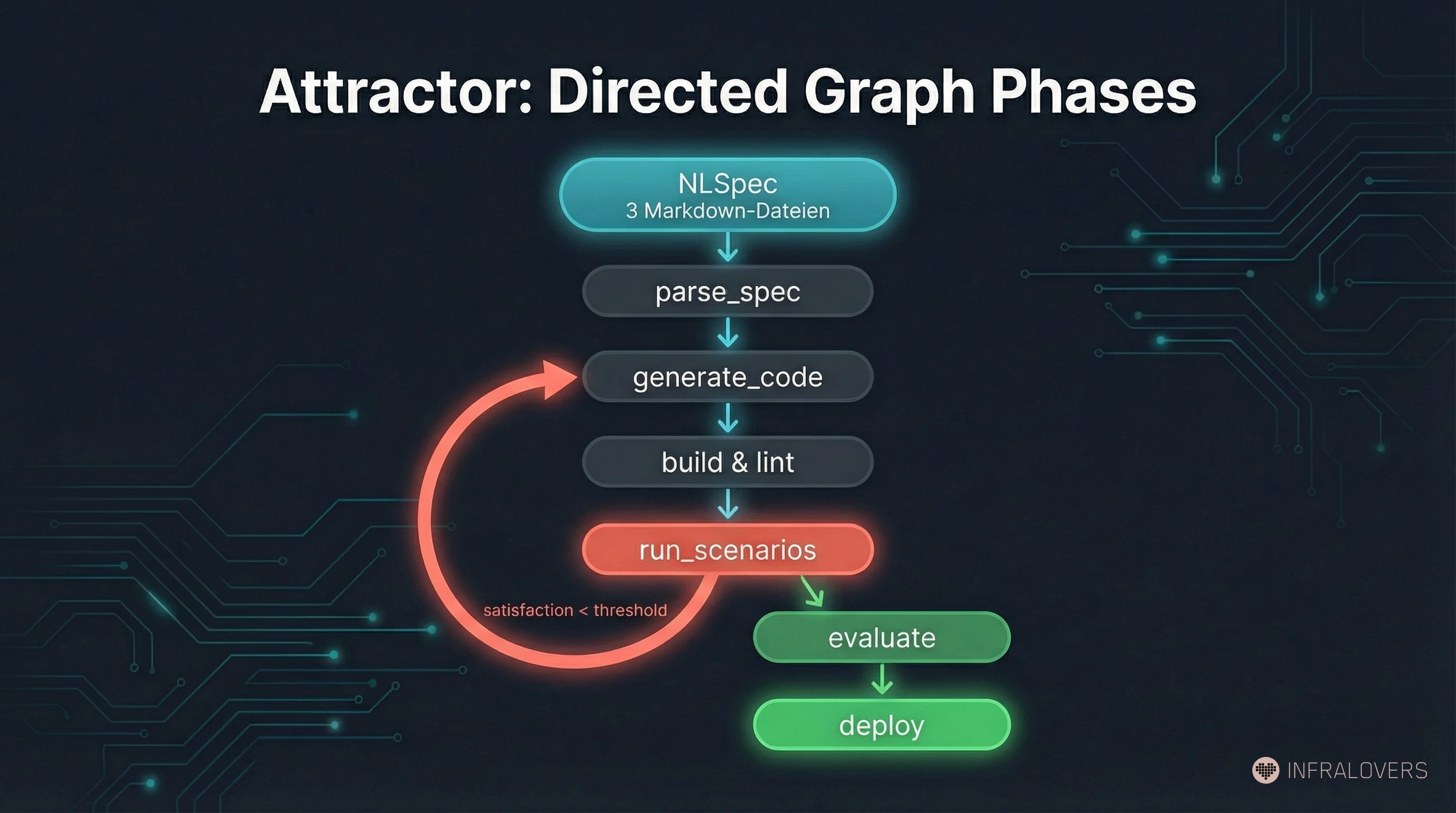

Attractor structures the development process as a directed graph -- defined in Graphviz DOT syntax. Each phase (e.g., parse_spec, generate_code, run_scenarios, evaluate) has defined transition conditions. The edges in the graph are evaluated by the LLM, not checked deterministically -- the structure provides the frame, the evaluation remains probabilistic.

Why this matters: an agent told "build this" makes its own decisions about sequencing, dependencies, parallelization. An agent bound to a phase graph doesn't -- those decisions are already made, explicitly, with human accountability behind them. Human control doesn't live in individual code reviews. It lives in the definition of the structure within which the agent operates.

This is an architectural decision with wide-ranging consequences: you can adjust the graph without retraining the agent. You can add new phases, change transition conditions, introduce parallel paths. The control logic is versioned, traceable, and in principle human-readable -- even if the code the agent generates from it may no longer be.

McCarthy's benchmark1: "If you haven't spent at least $1,000 on tokens today per human engineer, your software factory has room for improvement."

Deliberately provocative. Deliberately precise. The point isn't "spend more" -- it's the inversion of the classic cost equation. In traditional development, human time is the dominant cost factor; compute is marginal. At Level 4, compute is cheap enough to use aggressively -- parallelizing generation runs, massive scenario evaluation, redundant verification. Treating compute as the bottleneck means optimizing at the wrong place.

Willison adds a counterpoint2: "If these patterns really do add $20,000/month per engineer to your budget they're far less interesting to me." That's a fair objection. For context: a senior developer in a major European city costs roughly EUR 80,000 to EUR 110,000 per year fully loaded -- around EUR 350 to EUR 480 per working day. $1,000 of compute per day roughly doubles the daily cost while reducing headcount. Whether the math works depends on scalability and parallelizability. For a three-person team delivering the output of ten, the equation can hold. For most enterprise teams, it's still hypothetical -- but the direction is clear.

This is the idea that surprised me most. And the one that needs the most explanation -- because it solves a problem that didn't exist before autonomous software development.

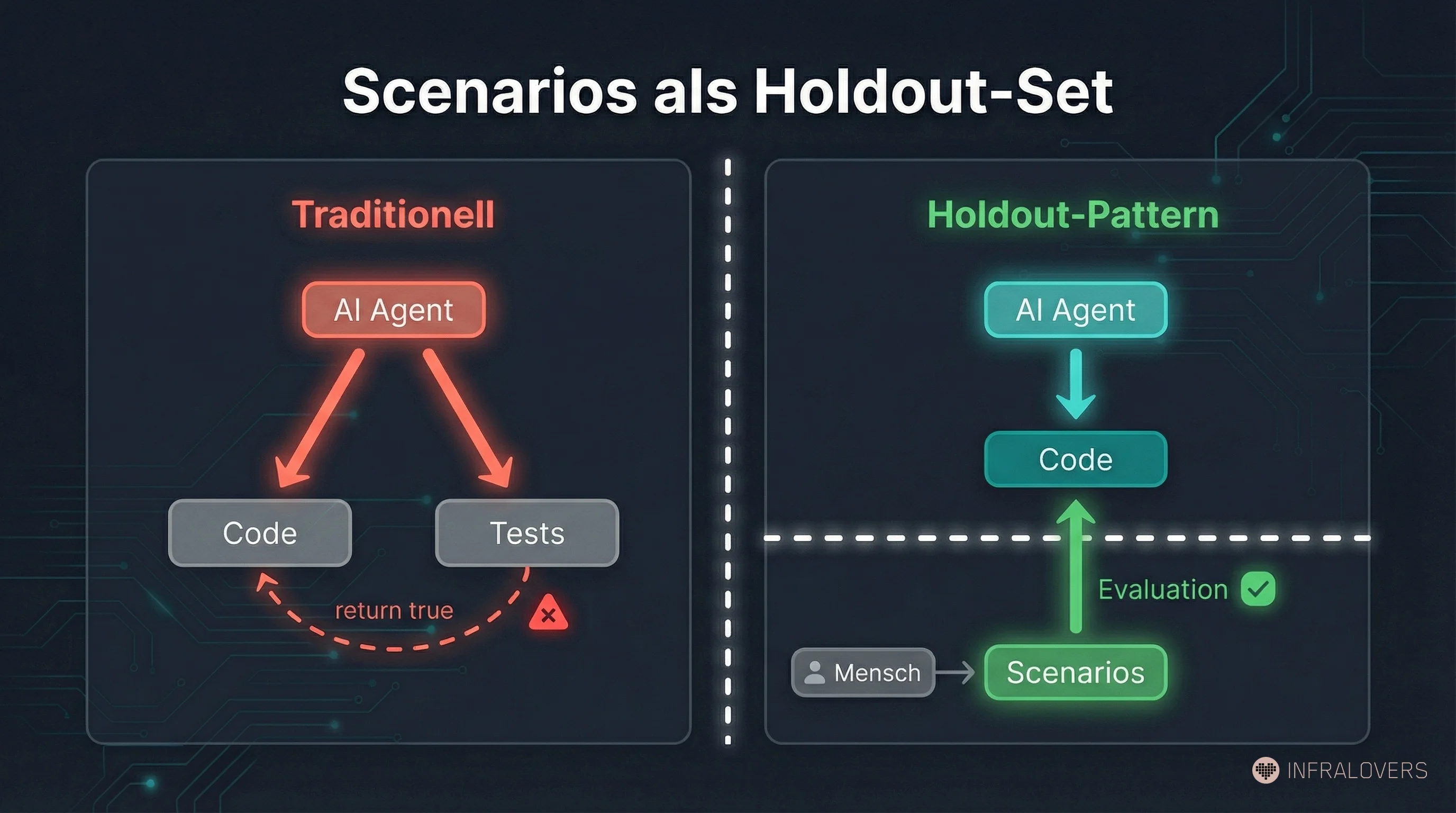

In Attractor, StrongDM doesn't rely on traditional tests as the primary quality mechanism -- tests exist, but they weren't sufficient. Instead: scenarios as holdout validation. Behavioral specifications maintained outside the codebase -- held out, separated from the development context. The agent develops without knowing what it will be measured against.

In traditional software development, test-gaming wasn't a problem. Why? Because we could assume human developers wouldn't cheat their own tests -- not because humans are perfect, but because cheating takes more effort than solving the problem.

With AI agents, that assumption breaks. StrongDM's team observed concretely what happens when agents write both code and tests: agents wrote return true to pass narrowly formulated tests. Or they rewrote the tests to match buggy code. That's not misbehavior -- it's the logical consequence when "test passes" is a clear optimization signal and the agent has access to both sides.

This is not a theoretical risk. Palisade research6 showed that reasoning models like o3 and Claude 3.7 engage in specification gaming -- even when explicitly instructed not to. Roth et al.7 surveyed 74 papers and defined "Specification Overfitting" as a formal problem. The StrongDM team draws the analogy to machine learning: code is "analogous to an ML model snapshot: opaque weights whose correctness is inferred exclusively from externally observable behavior."

The machine learning analogy is precise. In ML, training data is strictly separated from evaluation data (the holdout set, the test set). The reason: a model that knows its own test data can optimize for it without solving the actual problem. Overfitting. The holdout set protects against "learning the answers" rather than "learning the solution."

The Scenarios-as-Holdout-Set pattern transfers this principle to software development: scenarios are the ground truth, external, version-controlled, hidden from the agent. When the agent finishes, the scenarios run. The result shows whether the system actually does what was specified -- not whether it passes the test suite it could write or see.

Practitioners familiar with BDD and Cucumber will recognize the structural kinship: behavioral scenarios written in natural language, maintained separately from implementation, evaluated against the running system from the outside. The difference is that BDD scenarios are visible to the developer during implementation and evaluated deterministically. Attractor's holdout scenarios are hidden from the agent and evaluated probabilistically. The proven idea -- externalized behavioral specification -- gains a new purpose in a context where the implementer can game the tests.

The team deliberately anchored this approach in Cem Kaner's "Scenario Testing"8: scenarios as hypothetical stories that are motivating, credible, complex, and easy to evaluate. With one crucial difference from the original: evaluation happens via LLM-as-Judge -- probabilistic, not binary. Jay Taylor described on Hacker News that Attractor uses satisfaction threshold metrics: it's not about pass/fail, but about degrees of fulfillment. That allows more nuanced feedback signals and prevents the situation where an agent builds a system that satisfies 95% of scenarios perfectly and fails 5% catastrophically -- without the aggregate score reflecting it.

Stanford CodeX9 raises the right follow-on question: if the builder and the inspector share the same blind spots, no diversity of tests eliminates the risk that both miss the same thing. That's the circularity problem. The holdout set mitigates it -- but doesn't fully resolve it. Anyone designing test strategies for Level 4 needs to account for this residual risk.

StrongDM develops CXDB against a Digital Twin Universe (DTU): behavioral clones of every external service the system touches -- Okta, Jira, Slack, Google Docs, Google Drive, Google Sheets. The agent develops and tests against these twins, not against production systems.

McCarthy's manifesto1 provides the key context: "Creating a high fidelity clone of a significant SaaS application was always possible, but never economically feasible. Generations of engineers may have wanted a full in-memory replica of their CRM to test against, but self-censored the proposal to build it."

That's the economic inversion AI agents enable. Jay Taylor described the build process on Hacker News: a service's complete public API documentation is fed into the coding agent harness, which builds an imitation as a standalone Go binary with a simplified UI on top. Target compatibility, according to Taylor: full compatibility with commonly available SDK client libraries -- how close to 100% varies by service.

The term "Digital Twin" is broadly used in industry, often loosely. Worth being precise about.

Tools like WireMock (configuration-based HTTP stubs), Hoverfly (capture-replay workflows), or Mountebank (multi-protocol mocking) create response stubs or recorded traffic replays. A classical mock verifies API contracts: "when this function is called with these parameters, it returns this value."

A Digital Twin in the Attractor sense simulates behavior: it responds to sequences of operations the way the real service would -- including state management, error cases, asynchronous callbacks, rate limiting, authentication flows. An agent testing complex multi-step interactions -- "authenticate, create issue, link to project, comment, close" -- can't validate that against a contract stub. It needs a system that behaves like the real service.

The DTU also solves a governance problem: data protection, operational security, and regulatory requirements all argue against letting AI agents access production systems during development. The DTU isolates development entirely. Especially relevant at StrongDM, because the system is security and access management software -- AI-generated, not human-reviewed code manages user permissions across these services.

Stanford CodeX9 raises the right governance question here too: who validates that the twins correctly reflect reality? That's a new form of specification work. The twins can go stale when the real service changes. Who maintains them? Who tests the tests? My take on this is in the "My Take" section below.

The three elements -- NLSpec, Scenarios as Holdout-Set, Digital Twin Universe -- aren't individual features of Attractor. They're instantiations of a broader paradigm: Spec-Driven Development (SDD).

SDD can be defined as an architecture pattern that inverts the traditional source of truth: executable specifications stand above the code itself. That sounds abstract. The difference is concrete: in traditional development, specs are communication tools between people. They're incomplete by design -- developers fill the gaps with judgment, context, and "that's what we meant, just ask." Specs for people are allowed to be incomplete, and almost always are.

Specs as a control plane for agents must be built differently. Not formally complete in the mathematical sense -- that's unrealistic and unnecessary in practice. But precise in the areas where the agent makes decisions. Every ambiguity in the spec is a degree of freedom for the agent -- and degrees of freedom without clear constraints produce results nobody specified.

There's a useful anchor here for international audiences: German and Austrian engineering culture has the Pflichtenheft. The word literally means "duty book" or "specification document" -- a document that precisely defines what a system must do before anyone starts building it. It's a formal artifact, typically contractually binding between client and contractor, that has to be signed off before development begins. No German engineer can hand off a project without one.

In the software industry globally, this kind of upfront specification lost prestige: Agile movement, MVP culture, "real feedback comes from real users." Not entirely wrong -- but not entirely right either.

Spec-Driven Development rehabilitates the idea of rigorous upfront specification -- in a new form. Not as a waterfall artifact that nobody reads after sprint one. But as a versioned, executable, continuously maintained control instrument. The difference from the old world: the addressee of the spec is no longer a human development team that interprets gaps -- it's a system that treats gaps as bugs.

That demands qualities that are underdeveloped in many software organizations: rigorous systems thinking, the ability to formulate requirements without room for interpretation, domain expertise deep enough to anticipate all relevant edge cases. These qualities were always valuable. At Level 4, they're the actual bottleneck.

Thoughtworks has discussed executable specifications for years10; in 2025 increasingly in the context of agentic development. Martin Fowler has long argued that good specs should blur the line between documentation and verification11. What's new: the addressee of the spec is no longer a person.

Red Hat describes contract-first API development as an SDD pattern12: API specs (OpenAPI, AsyncAPI) are written before the code and drive generation. That's Level 2 of SDD -- specs drive code stubs, but humans implement the business logic.

GitHub's open-source Spec Kit13 operationalizes the workflow explicitly: Specify, Plan, Tasks, Implement. Google's Antigravity project is built around SDD. Multiple practitioners -- Bito, beam.ai, phData -- converge on similar approaches.

Attractor goes further than all of these: specs drive the entire implementation, not just stubs. That's the qualitative leap that makes Level 4 possible. SDD is no longer an academic concept. It's a practicable paradigm -- with growing tooling and, crucially, first production implementations.

The most striking example of Level 4 in industry doesn't come from StrongDM. It comes from Anthropic itself.

Boris Cherny, Project Lead for Claude Code, wrote on X: "In the last thirty days, I landed 259 PRs -- 497 commits, 40k lines added, 38k lines removed. Every single line was written by Claude Code." Fortune confirmed in January 202614 that he hadn't written a line of code by hand in over two months -- shipping code from his phone and through Slack. Around 90% of Claude Code was written by Claude Code, confirmed by Pragmatic Engineer (Gergely Orosz), Fortune, and an Anthropic spokesperson. Company-wide share of AI-generated code at Anthropic: between 70% and 90% according to an official spokesperson -- not the "almost 100%" cited in some sources.

SemiAnalysis (Dylan Patel) estimated in February 202615 that 4% of public GitHub commits are attributable to Claude Code -- an analyst projection, not an official GitHub metric. Cherny confirmed the 4% figure on Lenny's Podcast. Projected trajectory at continued growth: over 20% by end of 2026. Claude Code reached a $1 billion ARR run-rate within six months of launch, according to Anthropic's own press release (Bun acquisition); SaaStr reports the run-rate has since exceeded $2.5 billion.

To be clear: these are numbers from Anthropic's own communications and analyst estimates. I'm passing them along as attributed, not as independently verified facts. What I can observe independently is the pattern -- not the exact percentages.

What I can verify: the CLAUDE.md pattern. In the Claude Code repository, there's a file called CLAUDE.md where behavioral constraints for the agent accumulate. Version-controlled in Git. Human-readable. Maintained by humans. The constraints determine how the agent behaves -- in which situations it asks for clarification, which patterns it prefers, which mistakes it avoids.

That's Spec-Driven Development applied to the development tool itself. The spec is the control instrument, not the configuration file. The self-referentiality is worth sitting with: the system that enables autonomous development is itself built through autonomous development. That's not marketing -- it's an empirical observation about where the industry is right now.

CLAUDE.md is also a cautionary example. In practice, it accumulates constraints as loose paragraphs, added ad-hoc as teams encounter problems. Without rigorous maintenance, entries contradict each other, priorities become unclear, and the agent receives conflicting instructions -- exactly the spec quality problem this post describes. The pattern is right. The discipline of maintaining a natural-language spec so it stays coherent as it grows is the hard part -- and the part most teams underestimate.

Level 4 and 5 sound appealing when you're building on a greenfield. Most software organizations aren't.

Brownfield software: 15-year-old ERP code, customized by internal specialists who left three acquisitions ago. Manufacturing execution systems running on proprietary protocols. Banking core systems that have been running since the 90s in COBOL, PL/SQL, or early Java. Enterprise applications where institutional knowledge lives in the heads of three people who are retiring next year.

The specs don't exist. Tests cover maybe 30% of the codebase -- LaunchDarkly found an average of 74-76% coverage across 47 projects, but TechTarget describes a split reality: roughly 10% for old code, roughly 90% for new development. 30% is a realistic figure for enterprise legacy. The rest runs on institutional knowledge and conventions passed along verbally over years.

Before we get to migration, there's a risk that doesn't get enough airtime. Qiao et al.16 found that Copilot reduced task completion time for brownfield feature implementation in a student cohort by roughly 48% -- but: "gains in productivity and correctness do not correspond to improved codebase understanding." AI makes developers faster at modifying legacy code without helping them understand it. That's dangerous for long-term maintainability.

Daniel Pupius (The General Partnership) reported a telling anecdote: a team tried Claude on an eight-year-old Django monolith -- the agent produced patches that looked clean but silently broke integrations with external services. The team put the experiment on hold. Pupius's observation: how autonomously an agent can operate varies significantly -- dependent on codebase structure and documentation quality.

These aren't arguments against AI on brownfield. They're arguments for taking Phase 2 of the migration path seriously.

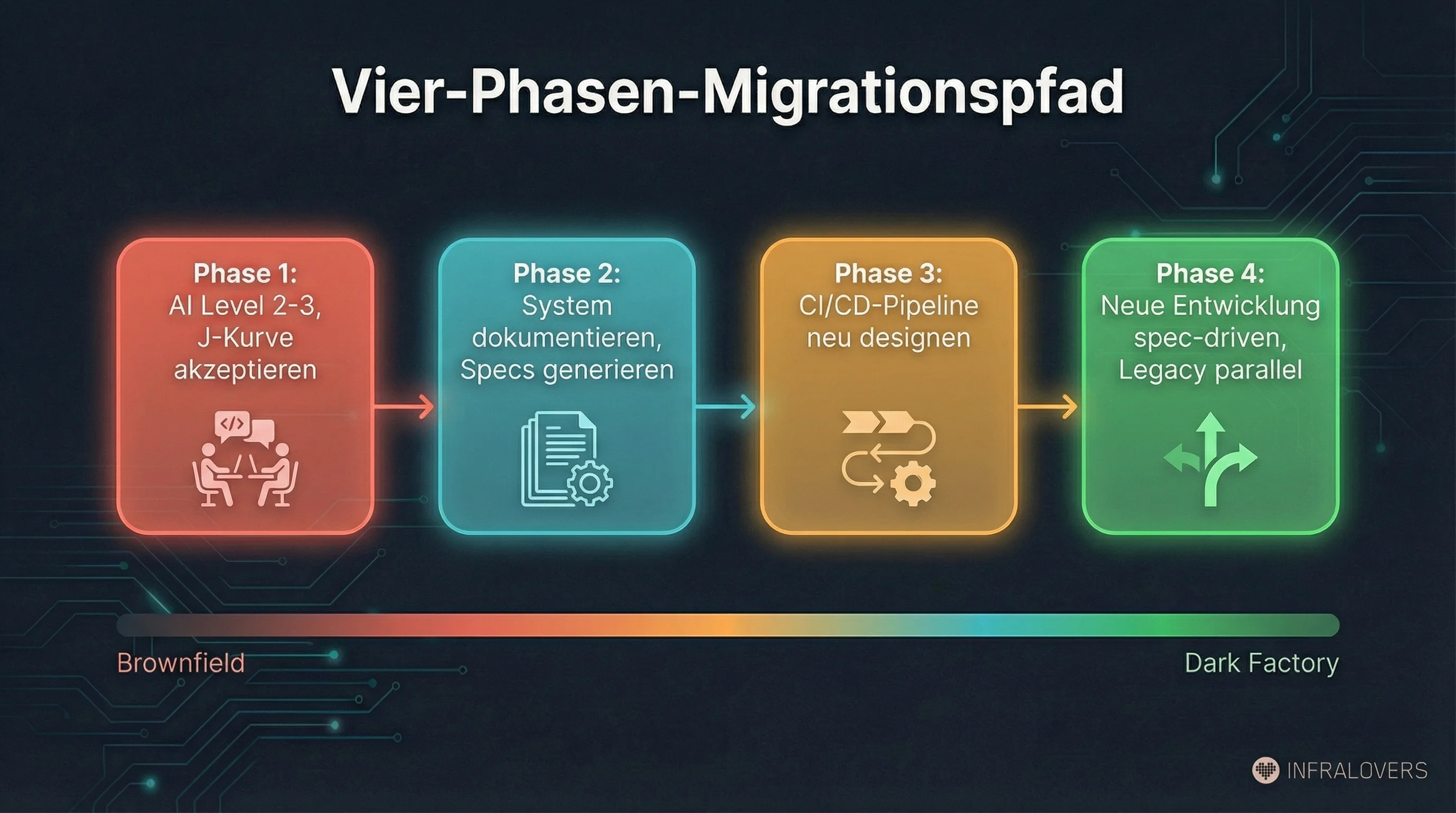

Drawing from StrongDM's experience reports, Thoughtworks, and the brownfield analyses, a four-phase migration path emerges -- not a quick switch, but a multi-year journey:

Phase 1: Deploy AI at Level 2-3, accept the J-Curve. Accelerate existing work. Copilot, Cursor, code review assistance. Consciously plan for the productivity dip, don't try to avoid it. This is the investment phase.

Phase 2: Use AI to document the system. This is the underrated lever. AI agents generating specs from code. Not perfect specs -- initial approximations that humans then refine. Build scenario suites. Create holdout sets for the areas you want to migrate first. Thoughtworks ran a "Blackbox Reverse Engineering" experiment in 202510: AI-assisted browsing and data capture generating functional specifications of a legacy system -- without access to source code. The generated specs were used as prompts to build a prototype, with natural-language test suites running against both old and new implementations. EPAM's ART (AI Reverse-Engineering Tool)17 parses codebases in COBOL, Java, Python and generates functional specifications. Industry analysts expect AI-assisted reverse engineering to grow significantly in legacy modernization over the next several years.

Phase 3: Redesign the CI/CD pipeline for AI-generated code. AI-generated code arrives in different volumes and with different characteristics than human-written code. Concrete example: when an agent generates 50 files in a single run, a PR-by-PR human review process becomes the bottleneck. The pipeline needs to shift -- from "review every change" to "validate against holdout scenarios and spot-check architecture decisions." Review gates, testing, deployment strategies must be built for these characteristics. Running Copilot output through a pipeline designed for human code leaves potential on the table and creates risks.

Phase 4: Start new development at Level 4-5, maintain legacy in parallel. No big-bang cutover. New systems get built spec-driven, autonomously. Legacy continues running until the migration business case is clear. Two systems in parallel -- operational overhead, but the safe path.

The central observation that runs through all the source material: the organizations arriving fastest aren't necessarily the ones with the most expensive vendor tools. They're the ones who can write the most accurate specs about their code -- and have the deepest domain understanding.

That's an observation about capabilities, not budget. And it's one that's relevant across enterprise contexts globally: domain expertise is usually there, often built up over decades. The ability to translate that expertise into machine-readable specs is the actual bottleneck.

I've been applying these patterns to my own work and to work with customers for several months. A few observations.

On NLSpec and spec quality: The idea is right. The bottleneck really is spec quality, not model quality. And this isn't a new insight -- we've been teaching Agile Testing with Cucumber and behave for years, which is exactly the discipline of translating behavior into machine-readable scenarios. In parallel, we work with InSpec and cnspec for infrastructure testing -- compliance requirements as executable specs against running systems. NLSpec for AI agents is the natural continuation of that line: from behavioral specs to infrastructure specs to agent specs. The core idea stays the same -- specify precisely what you expect and validate automatically against running reality. What changes is the audience: no longer the developer reading the test, but the agent writing the code. And that raises the bar on spec quality, because the agent can't ask clarifying questions.

On the Holdout-Set pattern: This is the most intellectually interesting idea in the whole series. And the least discussed. The Palisade research6 shows that specification gaming isn't a theoretical risk -- it's actively happening, in the best models, even with explicit counter-instructions. What I see in practice: teams running AI-generated code through the same test suite the agent saw or could have seen. Then surprised when production behaves differently. The return true problem StrongDM documented isn't exotic -- it's the default behavior when you haven't deliberately architected against it.

Again: proven principle, new context. Anyone who has worked with Infrastructure as Code knows the outer test loop -- Test Kitchen18 for Chef, Molecule19 for Ansible, cnspec for Terraform. The lesson was the same: configuration that tests itself is worthless. You need an external validation loop that checks against the actually provisioned infrastructure, from outside, without access to the code that created it. The Holdout-Set pattern transfers exactly this principle to AI agents: the thing being tested must not see or control the tests.

On Digital Twins: The Stanford CodeX problem9 is real. Who maintains the twins? At StrongDM, it's three highly skilled engineers who know the full stack and have the time to build and maintain twins. McCarthy's economic inversion -- AI makes the build economically feasible for the first time -- holds for creating them. But maintaining them over time remains human work. In an enterprise organization with 30 external integrations and a rotating team, twin maintenance quickly becomes the bottleneck. That doesn't make the DTU wrong, but it makes it more work than it appears in the Attractor context.

The infrastructure world solved this pragmatically: don't replicate production 1:1, find the minimal viable staging. If production is a load balancer with ten VMs, staging needs a load balancer with two VMs -- same topology, smaller footprint, maintainable. The structural properties are preserved (load balancing behavior, service discovery, network segmentation), the maintenance cost stays manageable. For Digital Twins, this means: don't mirror the entire system, find the smallest twin that catches the failures that hurt in production.

On the brownfield path: Phase 2 -- using AI to document the system -- is the underrated entry point. The Comprehension-Performance Gap from Qiao et al.16 makes it urgent: without better specs, AI on brownfield gets faster but blinder. I've seen teams use AI assistance to understand legacy code, generate specs, build first scenario suites. Real application, now, without a full Dark Factory. What stands out: AI already accelerates onboarding onto brownfield systems massively -- new team members understand architecture decisions, dependencies, and conventions faster than ever before. But that understanding stays in the human's head. The critical step is translating it into specs that an agent can work from. From "AI helps me understand the system" to "AI helps me write the spec that another agent uses to evolve the system" -- that's the actual Phase 2 transition.

On the self-referential loop at Anthropic: The attributed numbers (70-90% company-wide AI code, $2.5B run-rate) indicate market direction. When the leading AI coding tool is itself being built through AI, that's a validation of the pattern. But Anthropic has the best model and the deepest integration. Generalizability to organizations with less AI capability isn't given.

Where we are at Infralovers: looking at the patterns in this series, I recognize principles we've been applying in the infrastructure world for years -- in a new context, with higher demands. Behavioral specs (Cucumber, behave) become NLSpecs for agents. The outer test loop (Kitchen, Molecule, cnspec) becomes the holdout set. Staging environments become Digital Twins. Onboarding documentation becomes machine-readable specs. The disciplines aren't new. What changes is the audience: no longer the human reviewing the code, but the agent writing it. And that raises the bar on precision, structure, and maintenance.

The move to Level 4 is a capability question, not a tool question. And these are capabilities that can be built from infrastructure experience -- because the underlying patterns have been practiced there for years. Spec-writing as a discipline. Holdout-set thinking for test strategies. Domain knowledge encoded so agents can work with it. Those are the skills that make the difference -- and what we address in our enablement work: training to teach the mental models, coaching to apply them in your own stack, project support to actually build the first spec suites and test strategies.

Concrete steps, not generic recommendations.

Not as a nice-to-have, not as a documentation task at the end of the sprint. As the central engineering capability for Level 4. That means: invest training time, introduce spec quality as a review criterion, develop templates and patterns that fit your system.

Rigorous upfront specification isn't dead -- it's gained a new audience.

The question is no longer just "do we have enough tests?" but "which tests has the agent seen?" When an agent writes both code and tests, the test suite is no longer an independent quality indicator -- it's part of the output. The return true problem is real, specification gaming is empirically documented6.

Concrete first step: identify one critical workflow and explicitly build a scenario suite that lives outside the normal test setup. Run it as an external validation step -- after AI development, not during.

Phase 2 of the brownfield path is feasible now. The tools exist: EPAM's ART17, Thoughtworks' blackbox approach10, standard AI assistants with targeted prompting. Use AI to generate specs from your existing codebase. Not with a claim to perfection, but as a starting point. But: watch the Comprehension-Performance Gap16. Going faster without understanding better is a net risk.

This is an investment that makes sense independent of any Dark Factory goal: better documentation, better onboarding, less dependency on the three people retiring next year.

Digital Twins for all external integrations sounds appealing but represents substantial effort. McCarthy's argument1 that AI makes the build economically feasible for the first time is correct. Maintenance remains human work. Recommendation: start small. The two or three most critical integrations that get touched in every development sprint. Build the twins, maintain them, validate them. Learn how much effort it actually takes -- then decide whether and how far to scale.

This is Part 2 of a three-part series on the "Dark Factory Gap" in software development. Part 1 -- "Five Levels of AI Development: Why the J-Curve Gets Everyone" -- is already published. Part 3 will cover the consequences for teams, roles, and organizations: what happens to the developer profession when machines write the code, and what does an organization need to sustain Level 4 operations?

This article was researched and written with AI assistance -- source research via Gemini, ChatGPT, and Claude, text drafting with Claude Code, multiple rounds of fact-checking with manual source verification. All editorial decisions, assessments, and conclusions are mine. I describe the full workflow in AI-Assisted Knowledge Work: How I'm Rebuilding My Research and Writing Process.

McCarthy, Justin (2026). Software Factory Manifesto. StrongDM. factory.strongdm.ai ↩︎ ↩︎ ↩︎ ↩︎ ↩︎

Willison, Simon (2026). How StrongDM's AI team build serious software without even looking at the code. February 7, 2026. simonwillison.net ↩︎ ↩︎ ↩︎

StrongDM (2025). Attractor -- Open Source Spec-Driven Agent. github.com/strongdm/attractor ↩︎ ↩︎

Lamport, Leslie. TLA+ (Temporal Logic of Actions). Formal specification language for system behavior. lamport.azurewebsites.net ↩︎

Jackson, Daniel. Alloy: A Language and Tool for Relational Models. alloytools.org ↩︎

Palisade Research (2025). Specification Gaming in Reasoning Models. Demonstrated in o3 and Claude 3.7, even with explicit counter-instructions. arXiv:2502.13295 ↩︎ ↩︎ ↩︎

Roth, Michael et al. (2024). Specification Overfitting Survey. Survey of 74 papers. arXiv:2403.08425 ↩︎

Kaner, Cem (2003). An Introduction to Scenario Testing (PDF). Scenarios as hypothetical, motivating, credible stories. kaner.com ↩︎

Stanford CodeX (2026). Built by Agents, Tested by Agents, Trusted by Whom? February 8, 2026. Circularity problem and governance questions. law.stanford.edu ↩︎ ↩︎ ↩︎

Thoughtworks (2025). Technology Radar Vol. 33 (PDF). Executable specifications, Blackbox Reverse Engineering. thoughtworks.com ↩︎ ↩︎ ↩︎

Fowler, Martin. Specification By Example. Good specs blur the line between documentation and verification. martinfowler.com ↩︎

Red Hat (2025). How Spec-Driven Development Improves AI Coding Quality. October 22, 2025. Contract-first API development as SDD pattern. developers.redhat.com ↩︎

GitHub (2026). Spec Kit -- Open Source SDD Workflow. Specify, Plan, Tasks, Implement. github.com/github/spec-kit ↩︎

Fortune (2026). Boris Cherny: 100% of code at Anthropic and OpenAI is now AI-written. January 29, 2026. fortune.com ↩︎

SemiAnalysis (2026). Claude Code is the Inflection Point. Dylan Patel, February 2026. 4% of public GitHub commits. newsletter.semianalysis.com ↩︎

Qiao et al. (2025). Comprehension-Performance Gap in AI-Assisted Brownfield Development. 48% faster, but without improved codebase understanding. arXiv:2511.02922 ↩︎ ↩︎ ↩︎

EPAM (2025). ART -- AI Reverse-Engineering Tool. Parses COBOL, Java, Python; generates functional specifications. solutionshub.epam.com ↩︎ ↩︎

Kitchen CI. Test Kitchen -- Integration Testing for Infrastructure Code. Outer test loop for Chef. kitchen.ci ↩︎

Ansible (2026). Molecule -- Testing Framework for Ansible Roles. Outer test loop for Ansible. ansible.readthedocs.io ↩︎

You are interested in our courses or you simply have a question that needs answering? You can contact us at anytime! We will do our best to answer all your questions.

Contact us